Numerical Methods for the STEM Undergraduate

```matlab
% Program to get the quadrature points
% and weights for the Gauss-Legendre quadrature rule
% (requires the Symbolic Math Toolbox)
clc
clear all
syms x
% Input n: number of points in the quadrature rule
n=14;
% Calculating Pn(x), the Legendre polynomial of order n,
% using the recursive relationship
% P(order of polynomial, value of x)
% P(0,x)=1; P(1,x)=x;
% (i+1)*P(i+1,x)=(2*i+1)*x*P(i,x)-i*P(i-1,x)
m=n-1;
P0=1;
P1=x;
for i=1:1:m
    Pn=((2.0*i+1)*x*P1-i*P0)/(i+1.0);
    P0=P1;
    P1=Pn;
end
if n==1
    Pn=P1;
end
Pn=expand(Pn);
% The quadrature points are the n roots of Pn(x)
quadpts=solve(vpa(Pn,32),x);
quadpts=sort(quadpts);
% Finding the weights
% Formula for weights is given at
% http://mathworld.wolfram.com/Legendre-GaussQuadrature.html
% Equation (13): w(k)=2*(1-x(k)^2)/((n+1)^2*P(n+1,x(k))^2)
% Calculate P(n+1,x) once, since it does not depend on k
P0=1;
P1=x;
for i=1:1:n
    Pnp1=((2.0*i+1)*x*P1-i*P0)/(i+1.0);
    P0=P1;
    P1=Pnp1;
end
for k=1:1:n
    weights(k)=vpa(2*(1-quadpts(k)^2)/(n+1)^2/ ...
        subs(Pnp1,x,quadpts(k))^2,32);
end
fprintf('Quad point rule for n=%g \n',n)
disp(' ')
disp('Abscissas')
disp(quadpts)
disp(' ')
disp('Weights')
disp(weights')
```
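The program above relies on the Symbolic Math Toolbox (`syms`, `solve`, `vpa`). For readers without that toolbox, here is a rough sketch that finds the same points and weights in double precision. It applies Newton's method to $P_n(x)$, evaluated with the same recursive relationship, and then uses the same MathWorld Equation (13) for the weights. The Newton iteration, the starting guesses $\cos(\pi(k-0.25)/(n+0.5))$, the derivative identity $(x^2-1)P_n'(x) = n\,[x P_n(x) - P_{n-1}(x)]$, and the stopping tolerance are choices made for this sketch, not part of the original program.

```matlab
% Sketch (assumption: Newton's method instead of symbolic solve)
% Gauss-Legendre points and weights in double precision, no toolboxes needed
n = 14;                                    % number of quadrature points
xq = cos(pi*((1:n)' - 0.25)/(n + 0.5));    % starting guesses near each root of Pn
for iter = 1:100
    % Recursive relationship: (i+1)P(i+1,x) = (2i+1)xP(i,x) - iP(i-1,x)
    P0 = ones(n,1);
    P1 = xq;
    for i = 1:n-1
        P2 = ((2*i+1)*xq.*P1 - i*P0)/(i+1);
        P0 = P1;
        P1 = P2;
    end
    % Now P1 holds P(n,x) and P0 holds P(n-1,x)
    dP = n*(xq.*P1 - P0)./(xq.^2 - 1);     % derivative of P(n,x)
    dx = P1./dP;                           % Newton step
    xq = xq - dx;
    if max(abs(dx)) < 1e-14
        break
    end
end
% Weights from Equation (13): w = 2(1-x^2)/((n+1)^2 P(n+1,x)^2)
P0 = ones(n,1);
P1 = xq;
for i = 1:n
    P2 = ((2*i+1)*xq.*P1 - i*P0)/(i+1);
    P0 = P1;
    P1 = P2;
end
% P1 now holds P(n+1,x) at each quadrature point
w = 2*(1 - xq.^2)./((n+1)^2*P1.^2);
% Put the points in ascending order, as sort does in the symbolic program
xq = flipud(xq);
w = flipud(w);
format long
fprintf('Quad point rule for n=%g\n', n)
disp('Abscissas'), disp(xq)
disp('Weights'), disp(w)
```

With n=14 the results should agree with the symbolic program to roughly double precision. A quick sanity check is that the weights add up to 2, the length of the interval [-1, 1].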

In just 2.5 years since the numericalmethodsguy YouTube channel started in January 2009, the channel this month crossed the milestone of 1 million video views. It currently gets between 2,500 and 3,500 video views per day. Although the channel has its own playlists, the playlists for all available topics are also collected on a single webpage at http://nm.mathforcollege.com/videos/index.html

Complete resources for each available numerical methods topic, including textbook chapters, videos, multiple-choice tests, PPTs, and worksheets, are given at http://nm.mathforcollege.com/topics/textbook_index.html

__________________________________________________

This post is brought to you by Holistic Numerical Methods: Numerical Methods for the STEM undergraduate at http://nm.mathforcollege.com, the textbook on Numerical Methods with Applications available from the lulu storefront, the textbook on Introduction to Programming Concepts Using MATLAB, and the YouTube video lectures available at http://nm.mathforcollege.com/videos. Subscribe to the blog via a reader or email to stay updated with this blog. Let the information follow you.


Reference: Floating Point Representation


Reference: Approximation of First Derivatives by Finite Difference Approximations


The Taylor series for a function f(x) of one variable x is given by

$$f(x+h) = f(x) + f'(x)\,h + \frac{f''(x)}{2!}h^2 + \frac{f'''(x)}{3!}h^3 + \cdots$$

What does this mean in plain English?

As Archimedes would have said (without the fine print), “Give me the value of the function at a single point, and the values of all its derivatives (first, second, and so on) at that point, and I can give you the value of the function at any other point.”

It is very important to note that the Taylor series does not ask for the expressions of the function and its derivatives, just their values at a single point.
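To make the point concrete, here is a small sketch that uses nothing but the values of a function and its derivatives at one point. Purely for illustration, it assumes f(x) = e^x at x = 0, where the function and every one of its derivatives have the value 1, and it takes h = 1 with terms through the 10th derivative.

```matlab
% Sketch: approximate f(x+h) from the VALUES of f and its derivatives at x.
% Assumed example: f(x) = exp(x) at x = 0, so f(0) = f'(0) = f''(0) = ... = 1
x = 0;
h = 1;
derivs = ones(1, 11);          % f(0), f'(0), ..., f^(10)(0): values only, no formula
k = 0:numel(derivs)-1;         % derivative order of each term
approx = sum(derivs .* h.^k ./ factorial(k));   % Taylor series truncated after k = 10
exact = exp(x + h);            % used only to check the answer
fprintf('Taylor approximation of f(x+h): %.10f\n', approx)
fprintf('Exact value of f(x+h):          %.10f\n', exact)
```

The approximation should differ from e^1 only around the eighth decimal place, even though the code never uses the expression e^x to build it.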

Now the fine print: yes, all the derivatives have to exist and be continuous between $x$ (the point where you are) and $x+h$ (the point where you want to calculate the function). However, if you want to calculate the function approximately by using the $n^{\text{th}}$ order Taylor polynomial, then the $1^{\text{st}}, 2^{\text{nd}}, \ldots, n^{\text{th}}$ derivatives need to exist and be continuous in the closed interval $[x, x+h]$, while the $(n+1)^{\text{th}}$ derivative needs to exist and be continuous in the open interval $(x, x+h)$.
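These conditions are what let us say how good the $n^{\text{th}}$ order Taylor polynomial is. A sketch, again assuming f(x) = e^x about x = 0 with h = 1: since all derivatives of e^x exist and are continuous on [0, 1], the standard Lagrange form of the remainder (not derived in this post) bounds the error by $e^h h^{n+1}/(n+1)!$. The loop compares the actual error with that bound as n grows.

```matlab
% Sketch: error of the nth order Taylor polynomial of exp(x) about x = 0,
% evaluated at x + h with h = 1 (example chosen for illustration)
h = 1;
nMax = 8;
errs = zeros(1, nMax);
bounds = zeros(1, nMax);
for n = 1:nMax
    k = 0:n;
    pn = sum(h.^k ./ factorial(k));            % every derivative of exp at 0 equals 1
    errs(n) = abs(exp(h) - pn);                % actual error of the nth order polynomial
    bounds(n) = exp(h)*h^(n+1)/factorial(n+1); % Lagrange remainder bound on [0, h]
    fprintf('n = %d   error = %.3e   bound = %.3e\n', n, errs(n), bounds(n))
end
```

The error should shrink with each added term and always stay below the bound.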

Reference: Taylor Series Revisited


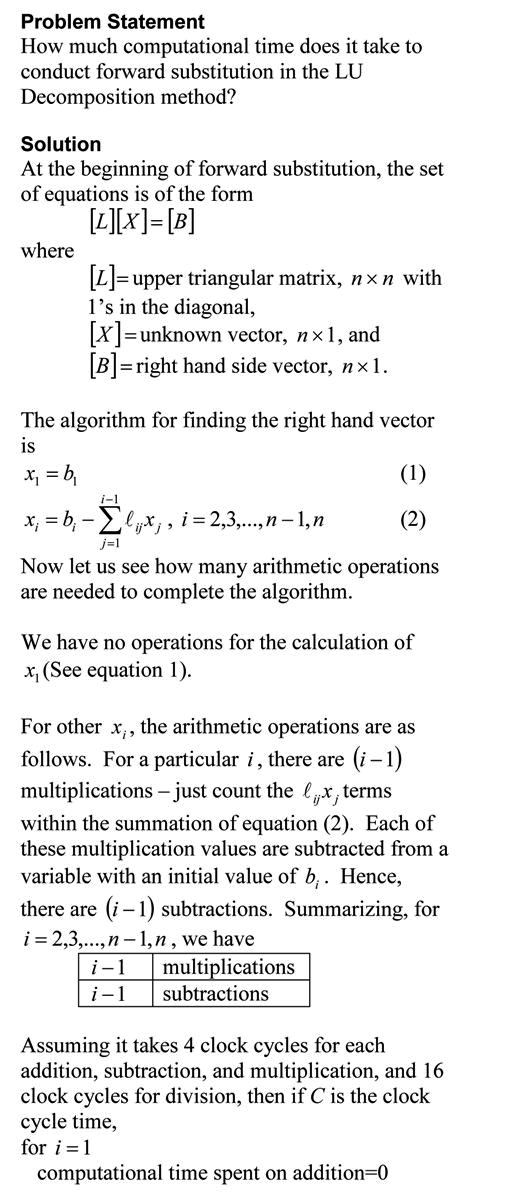

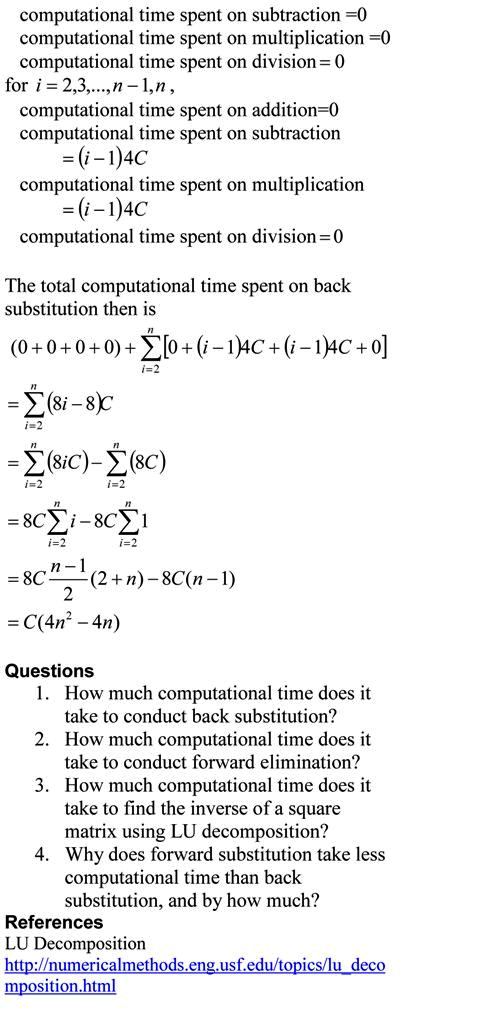

In the previous blog, we found the computational time for back substitution. This blog shows how to find the approximate time it takes to conduct forward substitution while solving simultaneous linear equations. The blog assumes an AMD-K7 2.0 GHz chip that uses 4 clock cycles each for addition, subtraction, and multiplication, and 16 clock cycles for division. Note that we are making reasonable approximations in this blog. Our main goal is to see what the computational time is proportional to: does the computational time double or quadruple if the number of equations is doubled?

The pdf file of the solution is also available.
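As a rough companion to the derivation, the sketch below runs forward substitution on a lower triangular system [L][z]=[b] and counts each operation as it happens, then converts the counts to time with the clock-cycle assumptions stated above. It assumes a general (non-unit) diagonal in L, so each row needs one division; if L has a unit diagonal, as in Doolittle LU decomposition, those divisions drop out. The counting in the post's own solution may differ, and the test matrix is random, chosen only for illustration.

```matlab
% Sketch: forward substitution with operation counting
% Assumed timings: 4 cycles for add/subtract/multiply, 16 for divide, 2.0 GHz clock
n = 200;
L = tril(rand(n)) + n*eye(n);   % illustrative lower triangular matrix, strong diagonal
b = rand(n,1);
z = zeros(n,1);
nAddSub = 0; nMult = 0; nDiv = 0;
for i = 1:n
    s = b(i);
    for j = 1:i-1
        s = s - L(i,j)*z(j);    % one multiplication and one subtraction
        nMult = nMult + 1;
        nAddSub = nAddSub + 1;
    end
    z(i) = s/L(i,i);            % one division per row
    nDiv = nDiv + 1;
end
cycles = 4*(nAddSub + nMult) + 16*nDiv;
time = cycles/2.0e9;            % seconds
fprintf('n = %d: %d mult, %d add/sub, %d div, time = %.3e s\n', ...
    n, nMult, nAddSub, nDiv, time)
```

The counts come out to n(n-1)/2 multiplications, n(n-1)/2 subtractions, and n divisions, so for large n the time grows roughly as n^2: doubling the number of equations roughly quadruples the time.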


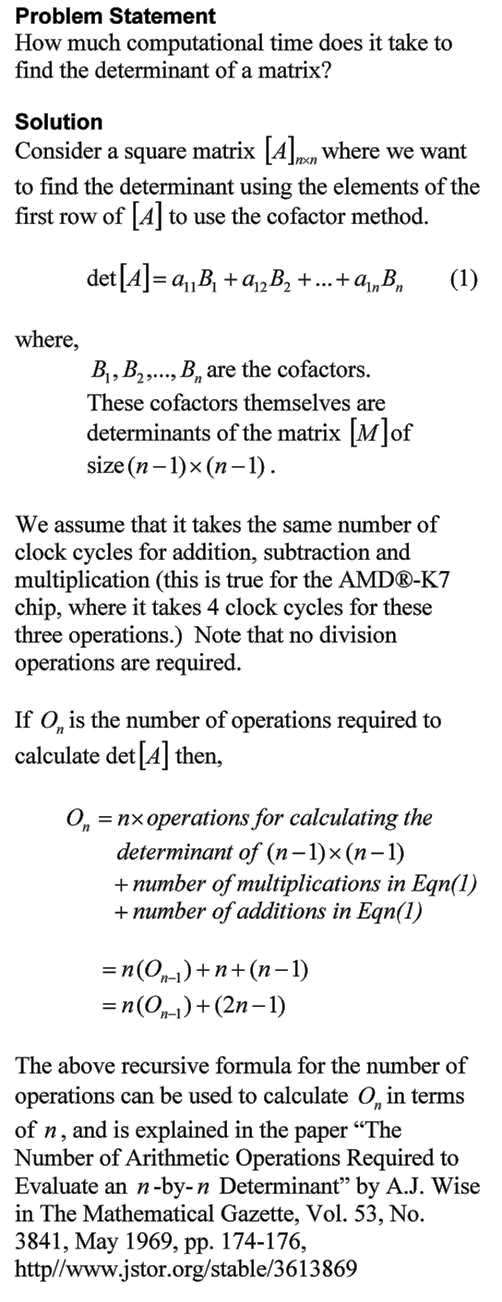

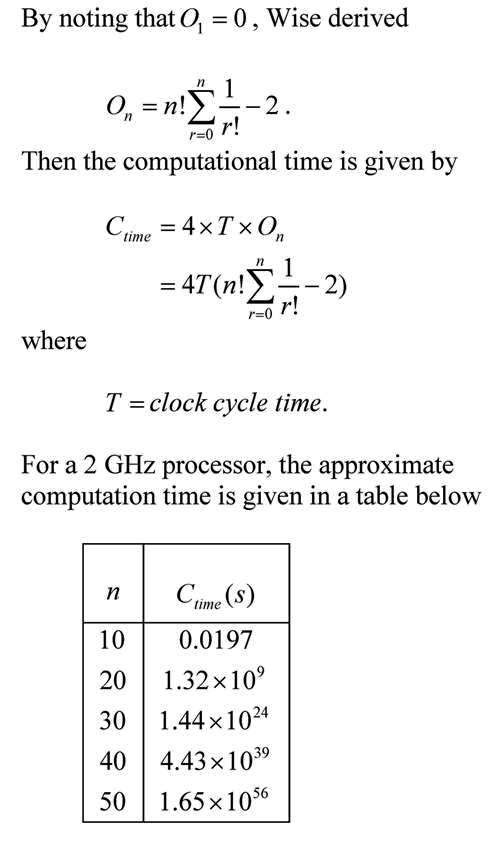

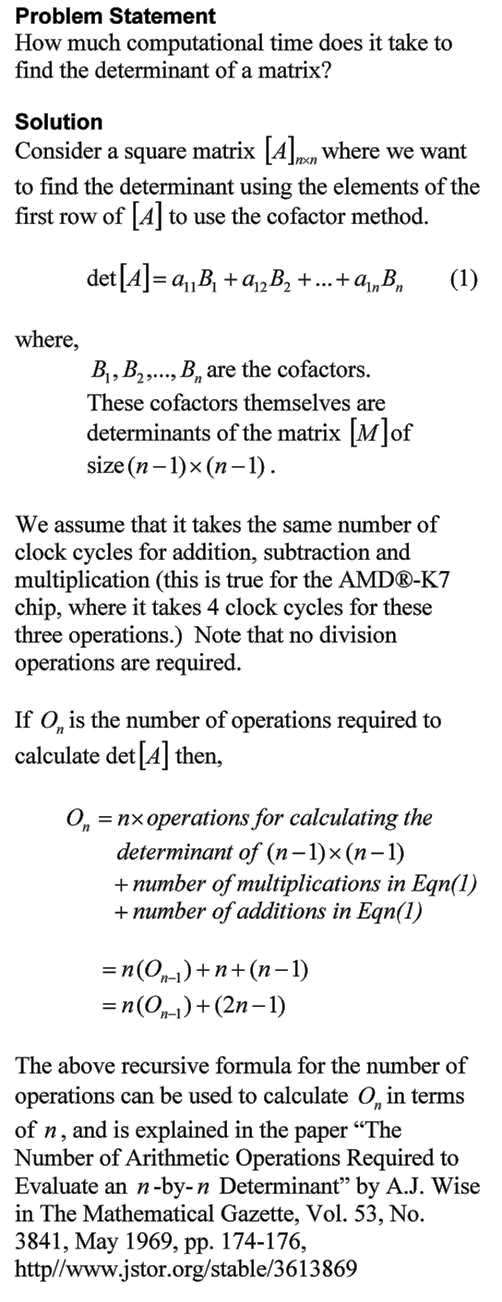

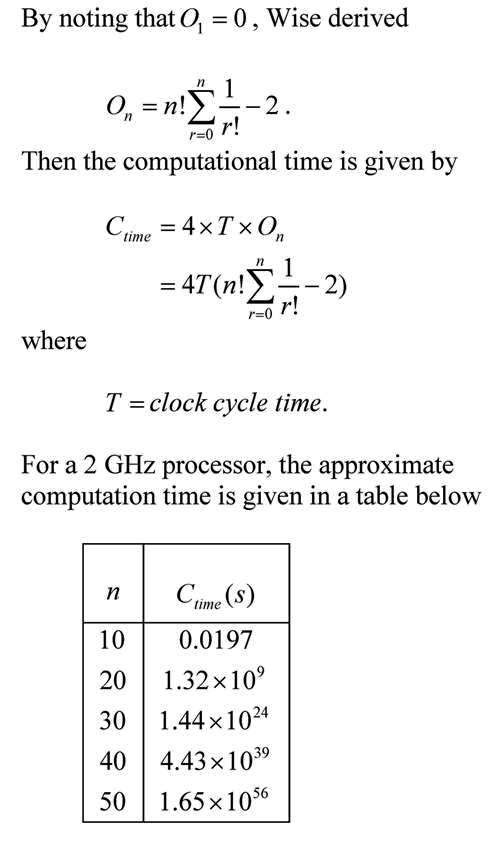

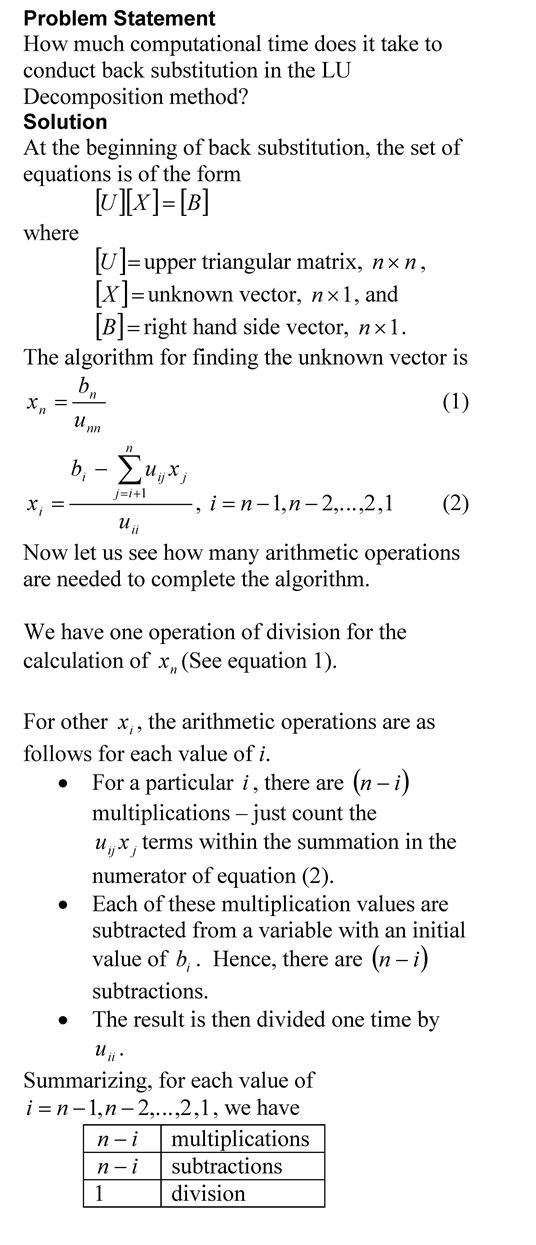

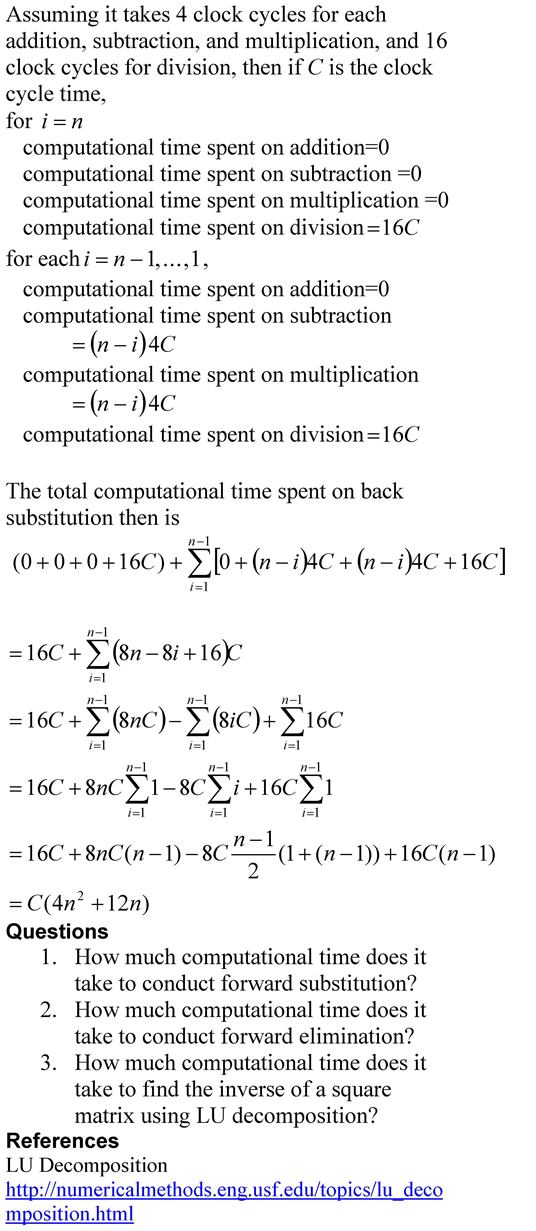

This blog shows how to find the approximate time it takes to conduct back substitution while solving simultaneous linear equations using the Gaussian elimination method. The blog assumes an AMD-K7 2.0 GHz chip that uses 4 clock cycles each for addition, subtraction, and multiplication, and 16 clock cycles for division. Note that we are making reasonable approximations in this blog. Our main goal is to find how the computational time is related to the number of equations.

The pdf file of the solution is also available.
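A closed-form version of the estimate can be sketched as follows. It assumes back substitution on an n x n upper triangular system with a general (non-unit) diagonal, which takes n(n-1)/2 multiplications, n(n-1)/2 subtractions, and n divisions, together with the clock-cycle figures stated above. The post's own count (in the images and PDF) may be organized differently.

```matlab
% Sketch: estimated back substitution time under the assumed AMD-K7 timings
clockRate = 2.0e9;   % Hz
% Cycles: multiplications and subtractions at 4 each, divisions at 16
T = @(n) (4*(n.*(n-1)/2) + 4*(n.*(n-1)/2) + 16*n)/clockRate;   % seconds
n = [100 1000 10000];
ratio = T(2*n)./T(n);   % how much longer it takes when the number of equations doubles
for k = 1:numel(n)
    fprintf('n = %5d   T(n) = %.3e s   T(2n)/T(n) = %.4f\n', n(k), T(n(k)), ratio(k))
end
```

The ratio T(2n)/T(n) approaches 4 from below as n grows, so the back substitution time is roughly proportional to the square of the number of equations.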
