A Metric to Quantify the Topsy-Turvyness (Wildness) of a College Football Season

The 2008 college football season is almost here, and news media, sports commentators, and bloggers will be hoping for something to hype. Luckily for them, the 2007 season did give them something to talk about; you would be hard-pressed to recall a more topsy-turvy season. Highly ranked teams regularly lost to low-ranked and unranked teams.

In just Week#1 of the 2007 season, Associated Press (AP) No. 5 University of Michigan lost to Appalachian State, an unranked team from the Football Championship Subdivision (formerly Division I-AA). The Associated Press wasted no time in booting Michigan out of the AP Top 25. Two weeks later, No. 11 UCLA lost to unranked Utah by a wide margin of 44-6 and met the same fate as Michigan: it was dropped from the AP Top 25.

The topsy-turvyness continued in the season, especially for No. 2 ranked teams. The University of South Florida, where I work, was ranked No. 2 when they lost to unranked Rutgers 30-27 in Week#8. This was the same week when three other teams (South Carolina, Kentucky, and California) ranked in the Top 10 of the AP poll also lost their games.

To top off the season, for the first time in the history of the Bowl Championship Series (BCS), the title game featured a team with two regular-season losses, Louisiana State University (LSU), and LSU ended up winning the national championship.

Many ranted and raved about the anecdotal evidence of a topsy-turvy season, but is it possible that the media and fans exaggerated the topsy-turvyness of the 2007 college football season? Were there other seasons that were more topsy-turvy than 2007?

To answer this question scientifically, this article proposes an algorithm to quantify the topsy-turvyness of a college football season. The author does not know of any previous literature that has attempted to develop a metric quantifying the topsy-turvyness of any sport whose teams are ranked regularly during the season.

The TT factor

The Topsy-Turvy Factor (TT factor) is a metric that quantifies the topsy-turvyness of a college football season. Two different TT factors are calculated: one for each week of the season, referred to as the Week TT factor, and one for the cumulative topsy-turvyness at the end of each week of the season, referred to as the Season TT factor.

The Week TT factor is found by comparing the AP Top 25 poll rankings of schools in the current week with those of the previous week. For each school in the AP Top 25, the difference between its current-week ranking and its previous-week ranking is squared. How do we account for teams that fall out of the rankings? A team that was ranked in the previous week but is unranked in the current week is treated as the No. 26 team in the current week. All the squares of the differences in the rankings are then added together and normalized on a scale of 0-100.
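The steps described above can be sketched in Python. This is only a rough illustration, not the formula from the paper: the function names are made up, the sum is assumed to run over the previous week's ranked teams, and the normalization is assumed to be a simple linear scaling between two bounds (`s_min`, `s_max`) that this post does not specify and that the caller must supply.

```python
def week_sum_of_squares(prev_ranked, curr_ranked, unranked_rank=26):
    """Sum of squared ranking changes between two consecutive polls.

    prev_ranked, curr_ranked: team names in poll order (index 0 is No. 1).
    A team ranked last week but missing this week is treated as No. 26.
    Assumption: the sum runs over last week's ranked teams.
    """
    curr_position = {team: i + 1 for i, team in enumerate(curr_ranked)}
    total = 0
    for i, team in enumerate(prev_ranked):
        prev_rank = i + 1
        curr_rank = curr_position.get(team, unranked_rank)
        total += (curr_rank - prev_rank) ** 2
    return total


def normalize_to_100(s, s_min, s_max):
    """Linearly map s from [s_min, s_max] onto a 0-100 scale.

    Assumption: linear scaling; the bounds are hypothetical inputs,
    since the post does not state how the normalization is done.
    """
    return 100.0 * (s - s_min) / (s_max - s_min)


# Toy three-team "poll" with made-up team names, for illustration only.
prev_poll = ["A", "B", "C"]
curr_poll = ["B", "A", "D"]  # C dropped out, so it counts as No. 26
s = week_sum_of_squares(prev_poll, curr_poll)
print(s)  # (2-1)^2 + (1-2)^2 + (26-3)^2 = 531
```

With real data, `prev_poll` and `curr_poll` would each hold the 25 team names from consecutive AP polls.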

The other TT factor, the Season TT factor, is calculated at the end of each week to gauge how topsy-turvy the season has been so far. The Season TT factor is a weighted average of the Week TT factors. Later weeks are given more weight in this average because, toward the end of the season, an upset of a ranked team is more topsy-turvy than an upset at the beginning of the season, when the strength of a ranked team is less established.
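A running weighted average of this kind might look like the sketch below. The post does not give the actual weights, so the default here (week i gets weight i) is purely an assumption chosen to make later weeks count more; the function name and the sample numbers are also made up.

```python
def season_tt_factors(week_tt, weights=None):
    """Running weighted average of Week TT factors.

    week_tt: Week TT factors in season order.
    weights: one weight per week. Assumption: if omitted, week i gets
    weight i (1, 2, 3, ...), which is NOT taken from the paper.
    Returns the Season TT factor at the end of each week.
    """
    if weights is None:
        weights = range(1, len(week_tt) + 1)
    season = []
    weighted_sum = 0.0
    weight_total = 0.0
    for tt, w in zip(week_tt, weights):
        weighted_sum += w * tt
        weight_total += w
        season.append(weighted_sum / weight_total)
    return season


# Made-up Week TT factors for a three-week "season".
print(season_tt_factors([30, 60, 90]))  # [30.0, 50.0, 70.0]
```

Passing a different `weights` list lets you try other weighting schemes and see how sensitive the end-of-season value is to that choice.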

| Season | End-of-Season TT factor |
|--------|-------------------------|
| 2007   | 60                      |
| 2006   | 46                      |
| 2005   | 48                      |
| 2004   | 40                      |
| 2003   | 55                      |
| 2002   | 48                      |

Table 1: End-of-Season TT factors for the 2002-2007 seasons

Table 1 shows the end-of-season Season TT factor for the last six football seasons. By this measure, 2007 (60) was the most topsy-turvy of the six seasons, with the 2003 season (55) not too far behind. In contrast, the 2004 season (40) was the least topsy-turvy.

Read the complete paper including formulas and detailed analysis at

http://www.eng.usf.edu/~kaw/TT_factor_paper_media.pdf

Can you write a program in MATLAB or any other language to find the Week TT factors and the Season TT factors?

_____________________________________________________

This post is brought to you by Holistic Numerical Methods: Numerical Methods for the STEM undergraduate at http://nm.mathforcollege.com

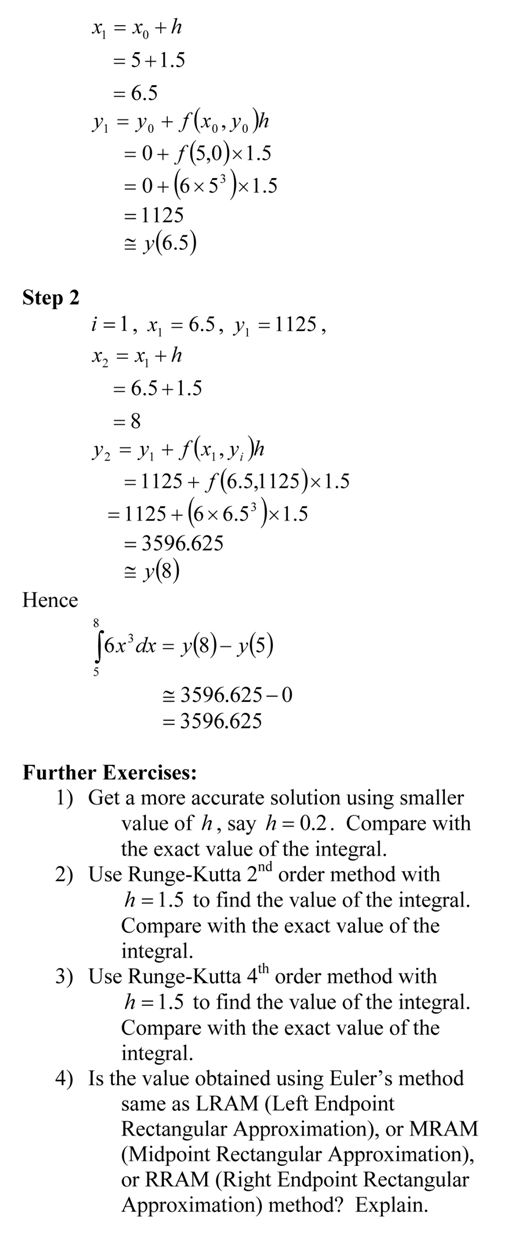

Exercises assigned to the students: